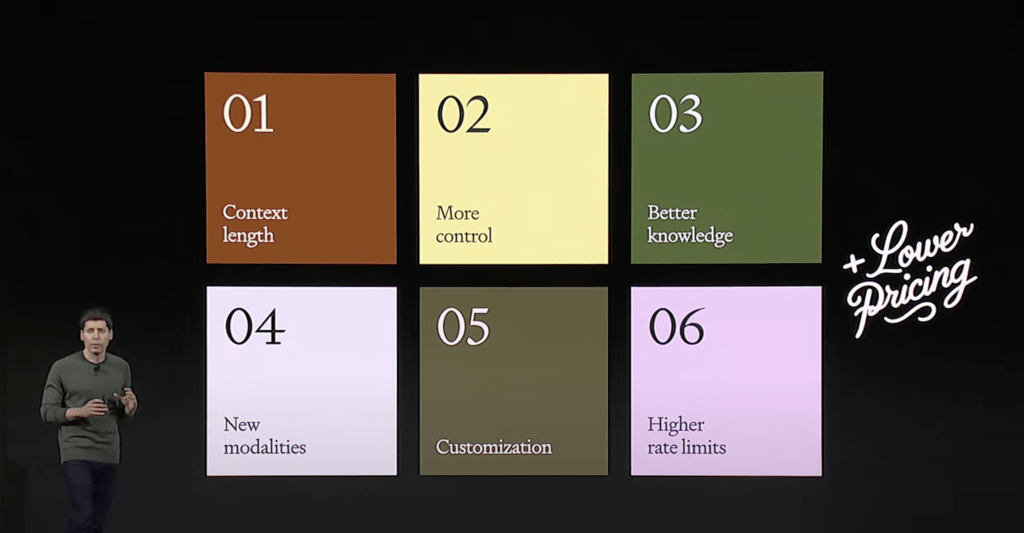

OpenAI CEO Sam Altman speaks during the OpenAI DevDay event on November 6, 2023, in San Francisco, California. Altman delivered the keynote address at the first-ever OpenAI DevDay conference.

At that event, OpenAI announced GPT-4 Turbo, an updated version of its GPT-4 language model, released first as a preview.

New features of GPT-4 Turbo

Here are some key features and details about GPT-4 Turbo:

- It supports a 128K-token context window, enough for roughly 300 pages of text in a single prompt

- Its knowledge extends to April 2023, so it can answer questions about more recent events than earlier GPT-4 models

- It arrived alongside new multimodal capabilities: GPT-4 Turbo with Vision accepts images as input, and the DALL·E 3 image-generation and text-to-speech APIs launched at the same time

- OpenAI opened experimental access to GPT-4 fine-tuning, which lets participating users customize the model for their own domains and tasks

- OpenAI doubled the tokens-per-minute rate limit for paying GPT-4 customers, so applications can send more text through the API each minute

- Input tokens cost 3x less and output tokens 2x less than with GPT-4, which makes it more affordable and accessible
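The 128K figure is measured in tokens, not pages or words. A quick way to check whether a document fits is the common rule of thumb that one token is about four characters of English text. The sketch below uses that heuristic (an approximation, not OpenAI's tokenizer; the `tiktoken` library gives exact counts) and reserves an assumed 4,096-token budget for the model's reply. The function names are hypothetical.

```python
import math

CONTEXT_WINDOW_TOKENS = 128_000  # GPT-4 Turbo context window, in tokens
CHARS_PER_TOKEN = 4  # rough English-text heuristic, not an exact tokenizer


def estimate_tokens(text: str) -> int:
    """Approximate the token count using the ~4 characters per token rule of thumb."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def fits_in_context(text: str, reserve_for_output: int = 4_096,
                    window: int = CONTEXT_WINDOW_TOKENS) -> bool:
    """Return True if the estimated prompt size plus a reply budget fits in the window."""
    return estimate_tokens(text) + reserve_for_output <= window


if __name__ == "__main__":
    print(estimate_tokens("Hello, GPT-4 Turbo!"))  # 5
    print(fits_in_context("Hello, GPT-4 Turbo!"))  # True
```

Because the estimate is approximate, leave some headroom when a document lands close to the limit.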

The differences between GPT-4 and GPT-4 Turbo

GPT-4 and GPT-4 Turbo are both powerful language models developed by OpenAI, but they have some differences in terms of features, performance, and cost. Here are some of the main differences:

- GPT-4 Turbo has a more recent knowledge cutoff than GPT-4, so it can answer questions about more recent events. GPT-4 Turbo has knowledge up to April 2023, while the original GPT-4 has knowledge only up to September 2021 [1].

- GPT-4 Turbo supports a larger context window than GPT-4, so it can accept and process more text at once. Its 128K-token window holds roughly 300 pages of text, while GPT-4 was offered with 8K-token and 32K-token windows [1][2]. The larger window helps with reasoning over and summarizing long documents.

- GPT-4 Turbo comes with multimodal capabilities [1][2]. GPT-4 Turbo with Vision can analyze images such as photos, charts, and other data visualizations, while the companion DALL·E 3 API creates images from text prompts and the text-to-speech API converts text to speech in several preset voices.

- OpenAI offers experimental access to GPT-4 fine-tuning, which lets participating users adapt the model to their own domains and tasks [1][2]. For example, a team could fine-tune on its own data for use cases such as chatbots, essay writing, or code generation.

- GPT-4 Turbo costs less than GPT-4. It charges 0.01 USD per 1,000 input tokens and 0.03 USD per 1,000 output tokens, while GPT-4 charges 0.03 USD and 0.06 USD respectively [1][2]. This makes GPT-4 Turbo more affordable for developers and enterprises.
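To make the image-input capability concrete: the Chat Completions API accepts a user message whose `content` is a list of parts mixing text and image URLs. The sketch below only builds that request body. It assumes the launch-era vision model name `gpt-4-vision-preview`; the helper name, the example URL, and the `max_tokens` value are illustrative choices, not values from this post.

```python
import json


def build_vision_request(prompt: str, image_url: str,
                         model: str = "gpt-4-vision-preview") -> dict:
    """Build a Chat Completions request body with one text part and one image part."""
    return {
        "model": model,
        "messages": [
            {
                "role": "user",
                # Multimodal content is a list of typed parts.
                "content": [
                    {"type": "text", "text": prompt},
                    {"type": "image_url", "image_url": {"url": image_url}},
                ],
            }
        ],
        "max_tokens": 300,  # upper bound on reply length; value chosen for illustration
    }


if __name__ == "__main__":
    body = build_vision_request(
        "Summarize the trend in this chart.",
        "https://example.com/chart.png",  # placeholder URL
    )
    print(json.dumps(body, indent=2))
```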

Can I use GPT-4 Turbo in my application?

You can access GPT-4 Turbo through the OpenAI API, where the launch model name is `gpt-4-1106-preview`, or through the Azure OpenAI Service, which requires an Azure OpenAI resource and a deployment of the GPT-4 Turbo model. For Azure, you can follow the steps in this tutorial [1] to get started. In either case, you can use the `openai` Python package to call the model programmatically; this article [2] shows examples of the Chat Completions API and the Chat Markup Language (ChatML).
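As a dependency-free illustration of what a Chat Completions call looks like on the wire, the sketch below uses only Python's standard library against the OpenAI API endpoint (Azure OpenAI uses a different endpoint URL and authentication header, so it does not apply there). It assumes an API key in the `OPENAI_API_KEY` environment variable; the helper names and the system prompt are hypothetical. In practice the `openai` package wraps these same steps.

```python
import json
import os
import urllib.request

API_URL = "https://api.openai.com/v1/chat/completions"


def build_chat_request(user_message: str, model: str = "gpt-4-1106-preview") -> dict:
    """Build a minimal Chat Completions request body."""
    return {
        "model": model,
        "messages": [
            {"role": "system", "content": "You are a helpful assistant."},
            {"role": "user", "content": user_message},
        ],
    }


def send_chat_request(body: dict, api_key: str) -> str:
    """POST the body to the API and return the assistant's reply text."""
    req = urllib.request.Request(
        API_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )
    with urllib.request.urlopen(req) as resp:
        payload = json.load(resp)
    # The reply text lives in the first choice's message.
    return payload["choices"][0]["message"]["content"]


if __name__ == "__main__":
    body = build_chat_request("Explain what a context window is in one sentence.")
    print(json.dumps(body, indent=2))  # inspect the request without sending it
    # To send it: send_chat_request(body, os.environ["OPENAI_API_KEY"])
```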

GPT-4 Turbo is a powerful and versatile language model that works with text and, in its vision variant, with image input. You can use it for tasks such as building chatbots, writing essays, and generating code.

The cost to use GPT-4 Turbo

The cost of using GPT-4 Turbo depends on the number of input and output tokens. A token is a chunk of text, often a short whole word or part of a longer word; in English, one token averages about four characters. Input tokens cost 0.01 USD per 1,000 tokens, and output tokens cost 0.03 USD per 1,000 tokens [1][2].

The more text you send and receive, the more you pay. For example, a request with 1,000 input tokens and 2,000 output tokens costs 0.01 USD for the input and 0.06 USD for the output, for a total of 0.07 USD. The original GPT-4 charges 0.03 USD per 1,000 input tokens and 0.06 USD per 1,000 output tokens [1][2], so the same request would cost 0.15 USD. This makes GPT-4 Turbo more affordable and accessible for developers and users.
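The arithmetic above can be wrapped in a small cost estimator. This is a minimal sketch using the per-1,000-token prices quoted in this post (GPT-4 here means the 8K-context model); the dictionary keys are labels chosen for this sketch rather than exact API model names, and prices may change over time.

```python
from decimal import Decimal

# USD per 1,000 tokens, as quoted in this post (launch-time prices).
PRICES = {
    "gpt-4-turbo": {"input": Decimal("0.01"), "output": Decimal("0.03")},
    "gpt-4": {"input": Decimal("0.03"), "output": Decimal("0.06")},
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> Decimal:
    """Return the USD cost of one request from per-1,000-token prices."""
    price = PRICES[model]
    return (price["input"] * input_tokens + price["output"] * output_tokens) / 1000


if __name__ == "__main__":
    print(request_cost("gpt-4-turbo", 1_000, 2_000))  # 0.07
    print(request_cost("gpt-4", 1_000, 2_000))        # 0.15
```

`Decimal` is used instead of `float` so that small per-token prices add up without binary rounding error, which matters when totals are summed over many requests.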

This latest release from OpenAI demonstrates their ongoing efforts to advance deep learning and provide more powerful and accessible language models for various applications. Developers can take advantage of this model to build applications that require advanced text generation and understanding capabilities.

For more details, refer to OpenAI's official documentation or explore the API for specific use cases and integrations.
